AdvProb: Adversarial Probes to Test Confidence Robustness in Out-of-Distribution Detection

Jul 15, 2025


Philippe Bergna

Jake Thomas


Abstract

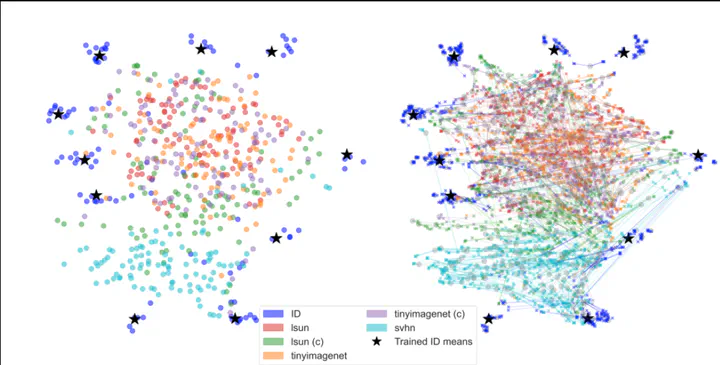

Neural networks frequently yield overconfident predictions when encountering out-of-distribution (OOD) samples, undermining their reliability in critical real-world tasks. In this paper, we introduce AdvProb (Adversarial Probes), a novel diagnostic framework for robust OOD detection. Our approach applies multiple targeted adversarial perturbations to each input, systematically probing the local stability of model predictions. By analyzing how model confidence shifts under these perturbations, we construct a comprehensive behavioral fingerprint for each input and train an XGBoost ensemble to robustly discriminate between in-distribution (ID) and OOD data. AdvProb substantially improves the OOD detection performance of standard classifiers and can be seamlessly integrated into existing methods like ODIN and Mahalanobis, yielding consistent performance gains across architectures and datasets. Our results highlight adversarial probes as a flexible and highly effective tool for enhancing OOD detection robustness.
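To make the probing idea concrete, the following is a minimal sketch of how a confidence-shift fingerprint could be assembled. It is not the paper's implementation: the toy linear softmax classifier, the single signed-gradient step per target class, the step size `eps`, and the two features recorded per probe are all assumptions chosen for illustration, and the helper names (`predict`, `targeted_probe`, `fingerprint`) are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(W, b, x):
    """Class probabilities of a toy linear softmax classifier (stand-in model)."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + bk for row, bk in zip(W, b)]
    return softmax(logits)


def sign(g):
    """Return -1, 0, or 1 depending on the sign of g."""
    return (g > 0) - (g < 0)


def targeted_probe(W, b, x, target, eps):
    """One signed-gradient step on x that pushes the prediction toward `target`.

    For a linear softmax model, the gradient of -log p[target] with respect
    to x is sum_k (p[k] - 1[k == target]) * W[k].
    """
    p = predict(W, b, x)
    grad = [
        sum((p[k] - (1.0 if k == target else 0.0)) * W[k][j] for k in range(len(W)))
        for j in range(len(x))
    ]
    return [xj - eps * sign(gj) for xj, gj in zip(x, grad)]


def fingerprint(W, b, x, eps=0.1):
    """Feature vector: base confidence, then two confidence shifts per probe."""
    base = predict(W, b, x)
    base_conf = max(base)
    feats = [base_conf]
    for target in range(len(W)):
        probed = predict(W, b, targeted_probe(W, b, x, target, eps))
        feats.append(max(probed) - base_conf)        # shift in top confidence
        feats.append(probed[target] - base[target])  # gain on the targeted class
    return feats


# Illustrative 3-class, 2-feature model (weights are made up for the demo).
W = [[2.0, 0.0], [0.0, 2.0], [-2.0, -2.0]]
b = [0.0, 0.0, 0.0]

fp_confident = fingerprint(W, b, [3.0, 0.0])   # input deep inside class 0
fp_ambiguous = fingerprint(W, b, [0.1, 0.1])   # input near the decision boundaries
```

In a full pipeline, fingerprints like these would be computed for labeled ID and OOD examples and passed as feature rows to a binary classifier; the abstract names an XGBoost ensemble for that stage, which is omitted here to keep the sketch dependency-free.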


This work presents a novel OOD detection method that tests the stability of model confidence using adversarial probes optimized with diverse objectives.