Jailbreaking LLMs

A small project where I red-team different LLMs with several types of attacks.

May 26, 2025

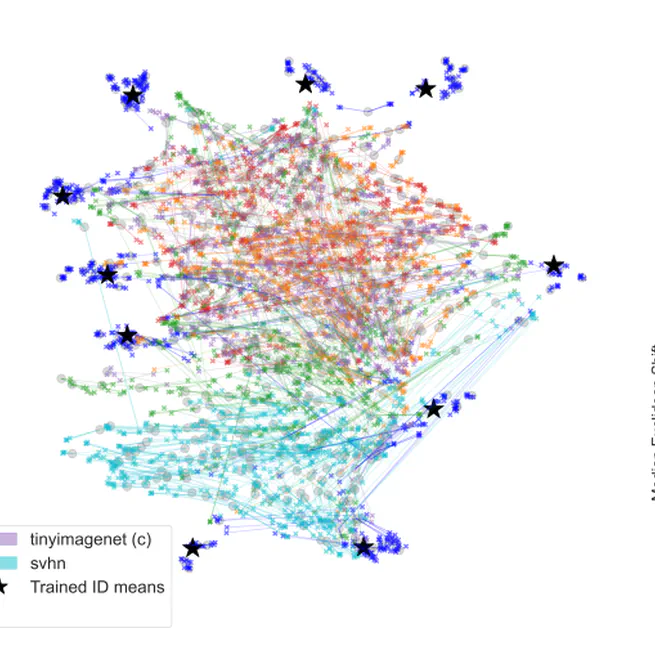

Out-of-Distribution (OOD) Detection with Adversarial Probes

Using adversarial perturbations as diagnostic probes to improve models' out-of-distribution (OOD) detection.

May 6, 2025

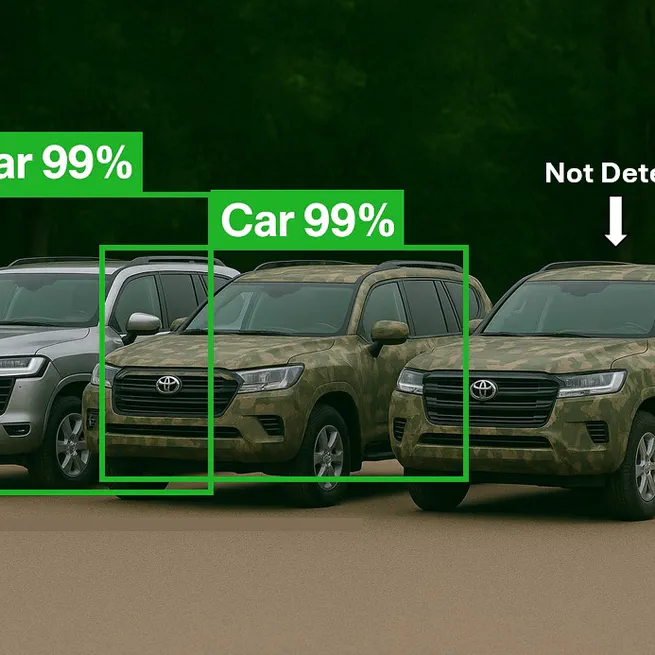

3D Adversarial Camouflage

A high-level overview of 3D physical adversarial attacks designed to fool AI object detection systems in real-world conditions.

Dec 21, 2024

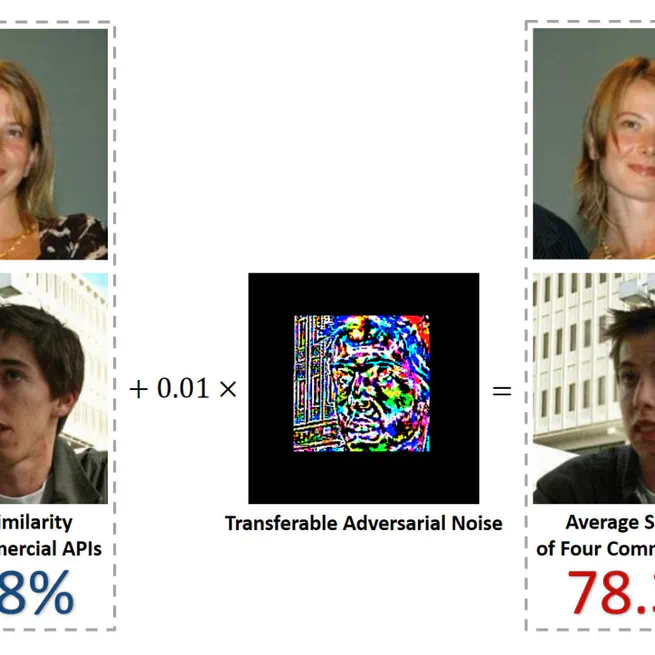

Adversarial Attacks for Facial Verification Systems

A high-level overview of black-box adversarial attacks against facial verification systems under realistic constraints.

Oct 26, 2023