AdvProb: Adversarial Probes to Test Confidence Robustness in Out-of-Distribution Detection

Using adversarial probes to test how stable a model's confidence is in out-of-distribution (OOD) detection.

Jul 15, 2025

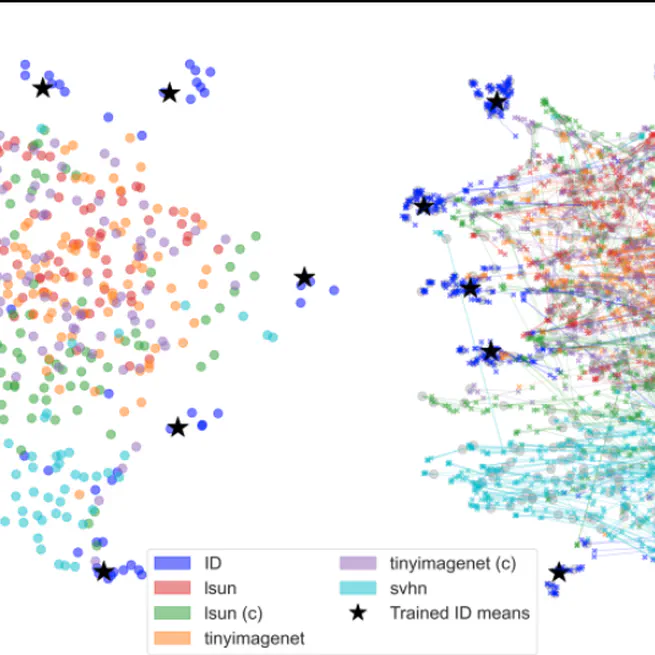

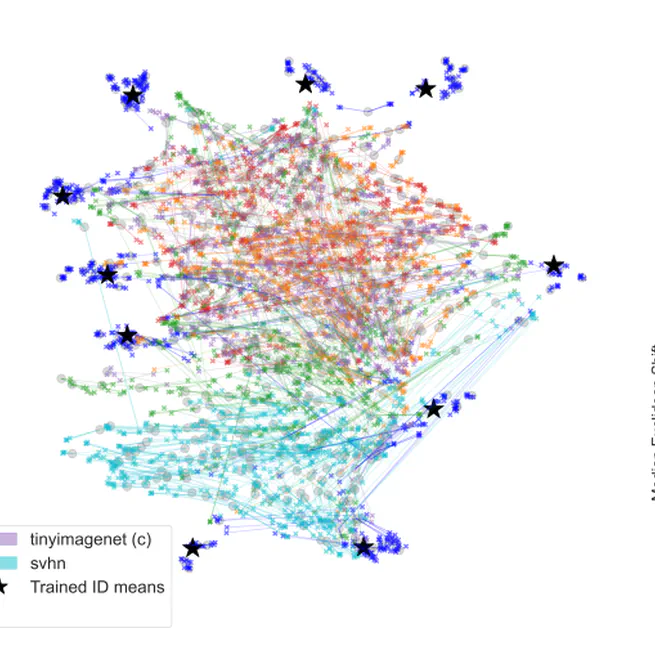

Out-of-Distribution (OOD) Detection with Adversarial Probes

Using adversarial perturbations as diagnostic probes to improve model performance on out-of-distribution (OOD) detection.

May 6, 2025

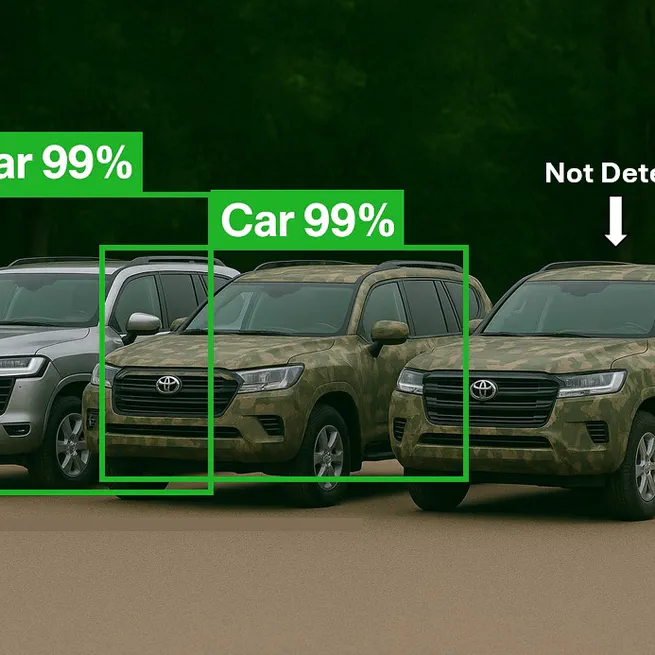

3D Adversarial Camouflage

A high-level overview of 3D physical adversarial attacks designed to fool AI object detection systems in real-world conditions.

Dec 21, 2024